Benchmarks


Benchmarks are used to verify that a solver solves the problem correctly, i.e., to verify the correctness of a code.[1] Over the past decades, the geodynamics community has developed a large number of benchmarks.

Depending on the goals of their original authors, these benchmarks either describe stationary problems in which only the solution of the flow problem is of interest (the flow may be compressible or incompressible, with constant or variable viscosity, etc.), or they model time-dependent processes. Some have analytically known solutions against which a numerical result can be compared directly, while for others only approximately known reference values are available. We have implemented a number of them in ASPECT to convince ourselves (and our users) that ASPECT indeed works as intended and advertised. Some of these benchmarks are discussed below. Numerical results for several of them are also presented in a number of papers (such as Fraters et al. [2019], Heister et al. [2017], Kronbichler et al. [2012], Tosi et al. [2015]) in much more detail than shown here.
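To illustrate what comparing against an analytically known solution involves, the following is a minimal sketch that does not use ASPECT at all. It assumes a toy stand-in problem, the 1D Poisson equation $-u'' = \pi^2 \sin(\pi x)$ on $(0,1)$ with $u(0)=u(1)=0$, whose exact solution is $u(x)=\sin(\pi x)$, and a second-order finite difference discretization. The function names `solve_poisson`, `l2_error`, and `observed_orders` are hypothetical and chosen only for this example. The sketch computes the discrete $L_2$ error on successively refined meshes and estimates the observed convergence order, which is the kind of quantity a benchmark with an analytical solution typically reports.

```python
import math


def solve_poisson(n):
    """Solve -u'' = pi^2 sin(pi x) on (0, 1) with u(0) = u(1) = 0 on n cells.

    Toy stand-in for a benchmark problem (an assumption of this sketch).
    Uses second-order central differences and the Thomas algorithm.
    """
    h = 1.0 / n
    x = [i * h for i in range(n + 1)]
    m = n - 1  # number of interior unknowns

    # Tridiagonal system: -u[i-1] + 2 u[i] - u[i+1] = h^2 f(x[i])
    diag = [2.0] * m
    rhs = [h * h * math.pi ** 2 * math.sin(math.pi * x[k + 1]) for k in range(m)]

    # Forward elimination; both off-diagonals are -1.
    for k in range(1, m):
        w = -1.0 / diag[k - 1]
        diag[k] += w
        rhs[k] -= w * rhs[k - 1]

    # Back substitution; boundary values u[0] and u[n] stay zero.
    u = [0.0] * (n + 1)
    u[m] = rhs[m - 1] / diag[m - 1]
    for k in range(m - 2, -1, -1):
        u[k + 1] = (rhs[k] + u[k + 2]) / diag[k]
    return x, u


def l2_error(n):
    """Discrete L2 norm of the difference to the exact solution sin(pi x)."""
    x, u = solve_poisson(n)
    h = 1.0 / n
    return math.sqrt(h * sum((ui - math.sin(math.pi * xi)) ** 2
                             for xi, ui in zip(x, u)))


def observed_orders(resolutions):
    """Return the errors and the convergence orders between successive meshes."""
    errors = [l2_error(n) for n in resolutions]
    orders = [
        math.log(errors[i] / errors[i + 1])
        / math.log(resolutions[i + 1] / resolutions[i])
        for i in range(len(errors) - 1)
    ]
    return errors, orders


if __name__ == "__main__":
    errors, orders = observed_orders([16, 32, 64])
    for n, e in zip([16, 32, 64], errors):
        print(f"n = {n:3d}  L2 error = {e:.3e}")
    print("observed orders:", [round(p, 3) for p in orders])
```

For this discretization one would expect an observed order close to 2; an order that falls clearly below the theoretical rate is the usual sign that something in the implementation is wrong.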

Before presenting these benchmarks, let us mention that the data shown below (and in the papers mentioned above) reflect the state of ASPECT at a particular time. ASPECT has, however, become more accurate and faster over time, for example through better stabilization schemes for the advection equations and improved assembly and solver times. We occasionally update sections of the manual, but when reading the sections on individual benchmarks below, it is worth keeping in mind that a current version of ASPECT may yield different (and often better) results than those shown.

- Running benchmarks that require code

- Onset of convection benchmark

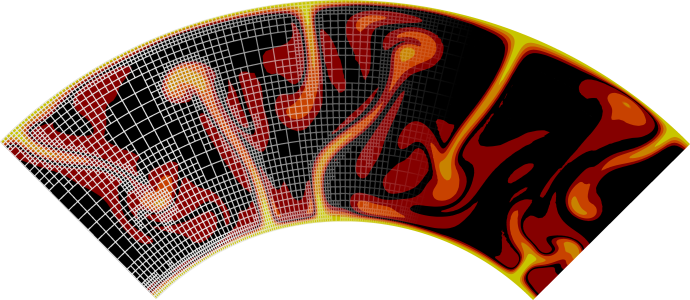

- The van Keken thermochemical composition benchmark

- Computation of the van Keken Problem with the Volume-of-Fluid Interface Tracking Method

- The Bunge et al. mantle convection experiments

- The Rayleigh-Taylor instability

- Polydiapirism

- The sinking block benchmark

- The SolCx Stokes benchmark

- The SolKz Stokes benchmark

- The “inclusion” Stokes benchmark

- The Burstedde variable viscosity benchmark

- The slab detachment benchmark

- The hollow sphere benchmark

- The 2D annulus benchmark

- The rigid shear benchmark

- The “Stokes’ law” benchmark

- Viscosity grooves benchmark

- Latent heat benchmark

- The 2D cylindrical shell benchmarks by Davies et al.

- The Crameri et al. benchmarks

- The solitary wave benchmark

- Benchmarks for operator splitting

- The Tosi et al. benchmarks

- Layered flow with viscosity contrast

- Donea & Huerta 2D box geometry benchmark

- Advection stabilization benchmarks

- Yamauchi & Takei anelastic shear wave velocity-temperature conversion benchmark

- Thin shell gravity benchmark

- Thick shell gravity benchmark

- 2D Lithosphere flexure benchmark with infill

- Gravity field generated by mantle density variations

- Brittle thrust wedges benchmark

- Compressibility formulation benchmarks

- Entropy adiabat benchmark

- Geoid Spectral Comparison

- Newton Solver Benchmark Set - Nonlinear Channel Flow

- Newton Solver Benchmark Set - Spiegelman et al. (2016)

- Newton Solver Benchmark Set - Tosi et al. (2015)

- The NSinker benchmark

- The Spherical Shell NSinker benchmark

- Time dependent annulus benchmark

- The viscoelastic plastic shear bands benchmarks

[1] Verification is the first half of the verification and validation (V&V) procedure: verification intends to ensure that the mathematical model is solved correctly, while validation intends to ensure that the mathematical model is correct. Obviously, much of the aim of computational geodynamics is to validate the models that we have.